Modularity dividends - Why standardisation beats customisation in IT

An Oxford study shows that IT projects carry uniquely ‘infinite’ cost risk, and its data hints at why. I want to propose another reason, and argue that standardisation isn't limiting but the key to reliable success.

Buckle up, we have a nerdy one. After one of my recent articles, an avid reader of the blog suggested I read up on a fella called Bent Flyvbjerg and his colleagues at Oxford University. They recently published a paper titled ‘The Uniqueness of IT Cost Risk: A Cross-Group Comparison of 23 Project Types’. They set out to test the thesis that IT projects are no different from other project types in terms of cost risk. That thesis, as they put it, was falsified.

Their findings shed some light on why big IT projects tend to fail so spectacularly, and they stumbled upon a pattern that extends beyond software. It's one I’ve wanted to write about for some time, so here’s my excuse.

The researchers compared cost overruns across 23 different project types (IT, aerospace, oil and gas, bridges, buildings, rail, etc.). They looked at data from 11,011 IT projects that lasted over a year, with a combined value of $4.64 trillion.

The whole paper is worth a read if you’re into this sort of thing - what they found challenges a lot of assumptions about why projects fail. They found, as any PdM might tell you, that IT projects are uniquely dangerous and come with infinite and unpredictable cost risks.

Buried in the paper’s data, however, is another pattern, one that I intend to put on the end of a stick (or a carrot) and bring to a lot of meetings. I’ll set some context and then explain a hypothesis I have about a ‘modularity dividend’.

IT projects have infinite risk

The study's primary finding is that, in terms of cost risk, IT projects are statistically more dangerous than any other project type, including something like nuclear waste storage.

Analysing cost overruns, they calculated the ‘alpha parameter’ for each project type: a measure of how extreme and unpredictable the cost overruns can be. The lower the alpha, the fatter the tail of extreme overruns.

IT projects had an alpha of 0.92, the only project type with a value below 1.0. That indicates an infinite mean, variance, and skewness, i.e. conventional statistical analysis and risk-prediction methods won’t work. They explain that ‘conventional prediction, by which we understand forecasting based on the mean, is impossible’ (page 7, Table 3).
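To make ‘infinite mean’ concrete, here is a small simulation. It is a toy model, not the paper's method: it assumes an idealised Pareto distribution with a minimum value of 1 as a stand-in for the overrun tail, sampled with Python's standard `random.paretovariate`. The helper names `theoretical_mean` and `sample_means` are made up for illustration. With the solar alpha (7.84) the sample mean settles quickly near its theoretical value; with the IT alpha (0.92) there is no theoretical mean to settle on, so the sample mean keeps jumping around and tends to grow as more projects are added.

```python
import random
import statistics


def theoretical_mean(alpha: float, x_m: float = 1.0) -> float:
    """Mean of a Pareto(alpha, x_m) distribution; infinite when alpha <= 1."""
    if alpha <= 1:
        return float("inf")
    return alpha * x_m / (alpha - 1)


def sample_means(alpha: float, sizes: list[int], seed: int = 42) -> list[float]:
    """Mean of n Pareto(alpha) draws (minimum value 1) for each n in `sizes`.

    A fixed seed keeps the run reproducible.
    """
    rng = random.Random(seed)
    return [statistics.fmean(rng.paretovariate(alpha) for _ in range(n)) for n in sizes]


sizes = [1_000, 10_000, 100_000]
for label, alpha in [("IT", 0.92), ("Solar", 7.84)]:  # alphas quoted in this article
    means = [round(m, 3) for m in sample_means(alpha, sizes)]
    print(f"{label:<5} alpha={alpha}: theoretical mean {theoretical_mean(alpha):.3f}, sample means {means}")
```

Changing the seed makes the point clearly: the solar numbers barely move, while the IT numbers change a lot from run to run.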

While 60% of the IT projects they looked at came in on (or under) budget, 40% ran over, and 18.26% of them exploded with cost overruns exceeding 50%. These disaster projects averaged 5.53 times their original budget, or 453% over (page 6, Table 2).

Most projects (60%) perform well enough, but the ones that go over go over badly, and so they dominate the risk profile.
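The two ways of quoting the disaster projects (5.53 times budget, 453% over) describe the same number: percent over budget = (multiple - 1) x 100. A tiny helper makes the conversion explicit, which is handy when comparing reports that mix the two conventions. The function names are illustrative only.

```python
def overrun_percent(multiple: float) -> float:
    """Final cost as a multiple of budget -> percentage over budget."""
    return (multiple - 1.0) * 100.0


def cost_multiple(overrun_pct: float) -> float:
    """Percentage over budget -> final cost as a multiple of budget."""
    return 1.0 + overrun_pct / 100.0


print(round(overrun_percent(5.53)))  # 453, the average for the >50% overrun group
print(cost_multiple(50))             # 1.5, i.e. the ">50% overrun" cut-off is 1.5x budget
```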

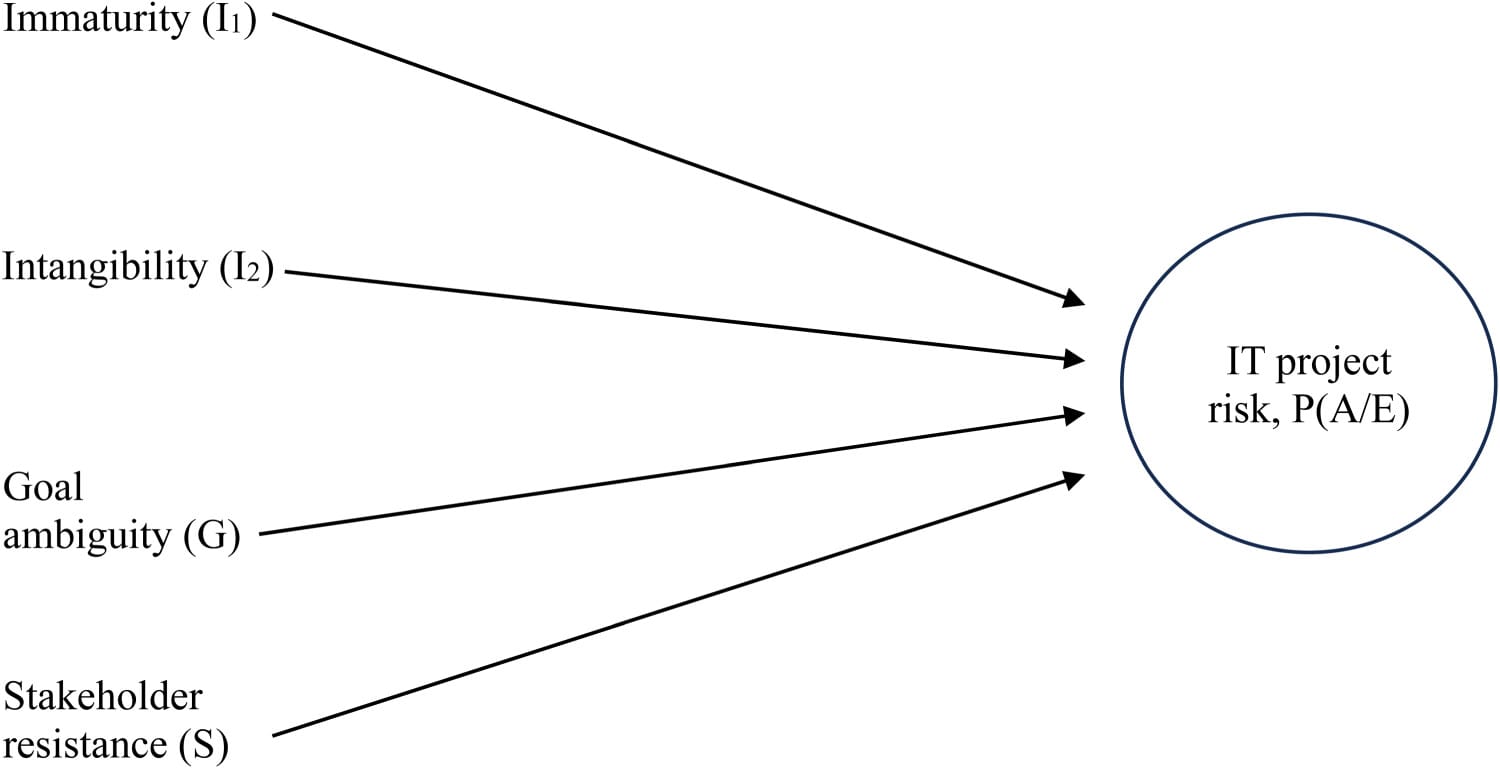

The authors identified four common factors contributing to IT's unique risk: immaturity as a discipline, intangibility of software, goal ambiguity, and stakeholder resistance (pages 2-3). They argue that, unlike other project types, IT projects suffer from all four simultaneously.

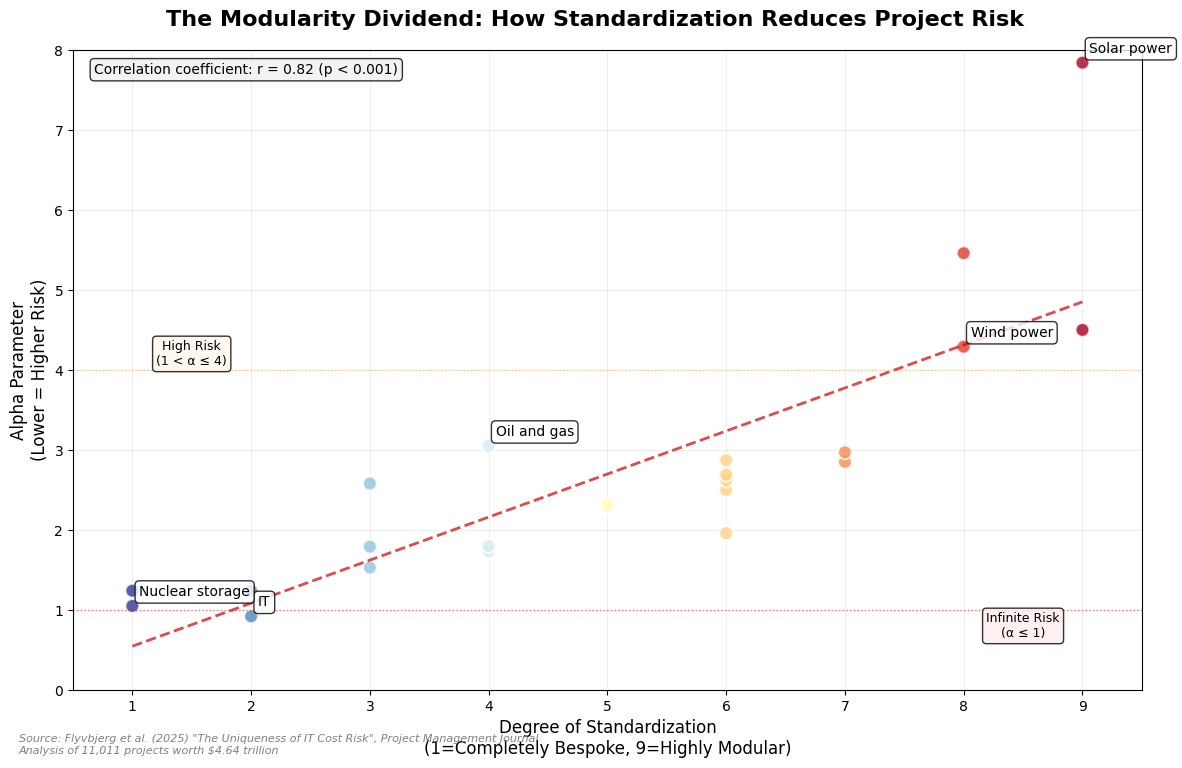

Examining the alpha values across all 23 project types reveals a correlation that the authors mention but only touch on in the paper's discussion section: the degree to which ‘bespokeness’ affects a project's risk. They didn’t go further because there isn’t any real data on project bespokeness, but I want to talk about the relationship between standardisation and risk predictability anyway.

Standardisation helps all industries

These are the project types with the thinnest tails, i.e. the highest alpha parameters and the lowest risk:

- Solar power: α = 7.836

- Energy transmission: α = 5.463

- Nuclear decommissioning: α = 4.504

- Wind power: α = 4.289

These are highly standardised industries. Solar installations use modular panels with standardised mounting systems. Wind farms deploy factory-built turbines with proven designs. Energy transmission relies on standardised grid components and protocols. Nuclear decommissioning, dealing with radioactive materials, follows rigorously standardised procedures developed over decades.

Contrast this with the highest-risk projects:

- IT: α = 0.92

- Nuclear storage: α = 1.047

- Defense: α = 1.235

- Olympics: α = 1.237

Each of these takes a more fundamentally bespoke approach. From my own experience, I’m confident saying that too many IT implementations, or parts of IT implementations, are treated as unique. Nuclear storage facilities are one-off designs for specific waste types. Defense projects create custom solutions to try to stay ahead in the race. And the Olympics are celebrations of uniqueness by definition, changing every time.

The difference is that nuclear storage, defense, and Olympic projects all have a clear and fundamental need to innovate and be bespoke. I would argue that this is absolutely not the case for IT.

The pattern extends throughout the middle ranges. Oil and gas projects (α = 3.05) involve custom drilling operations for unique geological conditions. Mining projects (α = 2.58) require bespoke extraction methods for each site. Buildings (α = 1.73) suffer from the classic problem of stakeholder-driven customisation - every client wants something different.
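One way to read these alphas is to ask which summary statistics even exist for each project type. Assuming an idealised Pareto tail (a simplification; the paper fits alpha to real overrun data), the k-th moment is finite only when alpha > k. The sketch below applies that rule to the alpha values quoted in this article; the dictionary simply restates those figures, and `infinite_moments` is a hypothetical helper name.

```python
# Alpha values as quoted in this article (from the paper's tables).
ALPHAS = {
    "Solar power": 7.836,
    "Energy transmission": 5.463,
    "Nuclear decommissioning": 4.504,
    "Wind power": 4.289,
    "Oil and gas": 3.05,
    "Mining": 2.58,
    "Buildings": 1.73,
    "Nuclear construction": 1.53,
    "Olympics": 1.237,
    "Defense": 1.235,
    "Nuclear storage": 1.047,
    "IT": 0.92,
}

MOMENTS = ["mean", "variance", "skewness"]  # 1st, 2nd and 3rd moments


def infinite_moments(alpha: float) -> list[str]:
    """Moments that are infinite (or undefined) for a Pareto tail with this alpha.

    For a Pareto distribution the k-th moment is finite only when alpha > k.
    """
    return [name for k, name in enumerate(MOMENTS, start=1) if alpha <= k]


# Print project types from fattest tail (lowest alpha) to thinnest.
for project, alpha in sorted(ALPHAS.items(), key=lambda item: item[1]):
    broken = ", ".join(infinite_moments(alpha)) or "none"
    print(f"{project:<24} alpha = {alpha:<6} infinite: {broken}")
```

Under this model only IT loses its mean, which matches the paper's headline finding, but every type below α = 2 (buildings, nuclear construction, the Olympics, defense, nuclear storage) also has an infinite variance.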

A visualisation of my hypothesis

To show you this idea, I created a rough ‘standardisation score’ for each industry based on nothing more than general knowledge, ranking solar and wind high (8-9) for their modularity, and IT and defense low (1-2) for their custom approaches. This scoring is very subjective and not nearly as rigorous as the paper; it's just an attempt to visualise the pattern I’m suggesting exists.

IT projects occupy the most dangerous position - lowest standardisation (2/10) with infinite risk (α = 0.92) - while solar power achieves the opposite extreme with high modularity (9/10) and predictable outcomes (α = 7.84).

Measuring the modularity dividend

The data shows what we might call the ‘modularity dividend’ - the measurable risk reduction that comes from standardised approaches.

The dividend is big. The authors observed that the 95% confidence intervals for alpha at the two extremes do not overlap.

Solar projects, with their standard panels and installation procedures, have an alpha roughly 8.5 times that of IT projects (7.84 vs 0.92). The pattern also holds within similar domains: nuclear decommissioning (standardised procedures, α = 4.50) vastly outperforms nuclear construction (bespoke designs, α = 1.53).

The authors note this pattern when discussing the Apple App Store specifically:

‘Apps on the App Store follow a modular design made mandatory by Apple (...) This has de-risked the App Store, unlike conventional bespoke IT’ (page 11). This supports the idea that standardisation reduces risk, and shows that it can exist in IT.
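The solar vs IT comparison (α = 7.84 vs 0.92) actually understates how different the two tails are, because alpha sits in an exponent. In an idealised Pareto tail, the chance of an overrun landing k times beyond the point where the tail starts is k to the power of minus alpha. The sketch below compares the two alphas under that assumption. It also assumes both tails start at the same threshold, which is a simplification rather than something the paper states, and `exceedance` is a hypothetical helper name.

```python
def exceedance(k: float, alpha: float) -> float:
    """P(X > k * x_m) for a Pareto tail starting at x_m: simply k ** -alpha (k >= 1)."""
    return k ** -alpha


# Compare how often each tail reaches 2x, 5x and 10x past its threshold.
for k in (2, 5, 10):
    it, solar = exceedance(k, 0.92), exceedance(k, 7.84)
    print(f"{k:>2}x past the threshold: IT {it:.3g}, solar {solar:.3g}, IT/solar {it / solar:,.0f}")
```

Under these assumptions, about a fifth of the IT tail lies beyond five times the threshold, while for solar it is a few in a million.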

Why this matters for IT

This could have real implications for how we think about project management in general. The data suggests that the drive toward customisation, often considered a competitive advantage, is actually more likely to hurt value than add to it.

Industries that successfully standardised their approaches didn't just reduce costs; they changed their risk profiles. Solar power transformed from an experimental technology with unpredictable costs into the most reliable project type in the paper’s dataset. Wind power achieved similar results through factory-built standardisation. Both are even newer industries than IT.

The IT industry, at least in my experience, tends toward a culture of custom solutions, born of a ‘we can do it better’ or ‘my problem is special’ mentality. But every ‘special requirement’ and ‘custom solution’ pushes projects toward the unpredictable end of the risk spectrum.

What we can learn

We can stop treating every project as special. When I talk to startups, I spend a lot of time talking about ‘solved problems’, especially when it comes to technology and building apps. With only very specific, and usually cutting-edge, exceptions, it’s all been done before. Let’s just do what’s worked before and standardise our approaches.

Before adding a custom feature or bespoke requirement, ask: what's the cost of not using a standard approach?

Embrace boring solutions. Solar and wind power became reliable not through innovation, but through standardisation. The most successful industries took exciting, experimental technologies and made them boring through modularity. Whatever it is that you’re building, find the unique selling point and innovate or iterate on that, and make everything else as easy and boring as possible.

Question the customisation premium. We've internalised the idea that bespoke solutions provide competitive advantages, and that doing things our own way gives us more flexibility or more value. But the data suggests the opposite: customisation correlates with more project disasters. The companies winning aren't those with the most flexible solutions, but those with the most predictable ones.

Learn from adjacent industries. If nuclear decommissioning can achieve excellent risk performance through standardised procedures, what excuse does software have?

Of course, it’s important to remember that this hypothesis is not part of the paper. The authors identified four factors: immaturity as a discipline, intangibility of software, goal ambiguity, and stakeholder resistance (pages 2-3). Standardisation was only suggested as a possible further factor, but it's one I think is quite important too.